Software Team

Good vibes.

Our Process

The technical documentation is below - this is how we got to the state documented there! It is an incomplete list because this was a massive effort and it's impossible to reproduce every step here. We're doing our best to capture the important parts!

The goal is to write the software for an icosphere that can move forward by taking multiple steps in a given direction (orientation via IMU, 20 servos to actuate the sides of the icosphere). It should also play audio.

Tuesday, 11th

Matti and Sun met in the evening to figure out bi-directional communication between the ESP32 microcontroller and a browser page that acts as the brain of the project. They opted for a UDP connection and built a first prototype that establishes a connection.

The project is initialized and we're rolling! Well, not yet, but we're getting there.

Wednesday, 12th

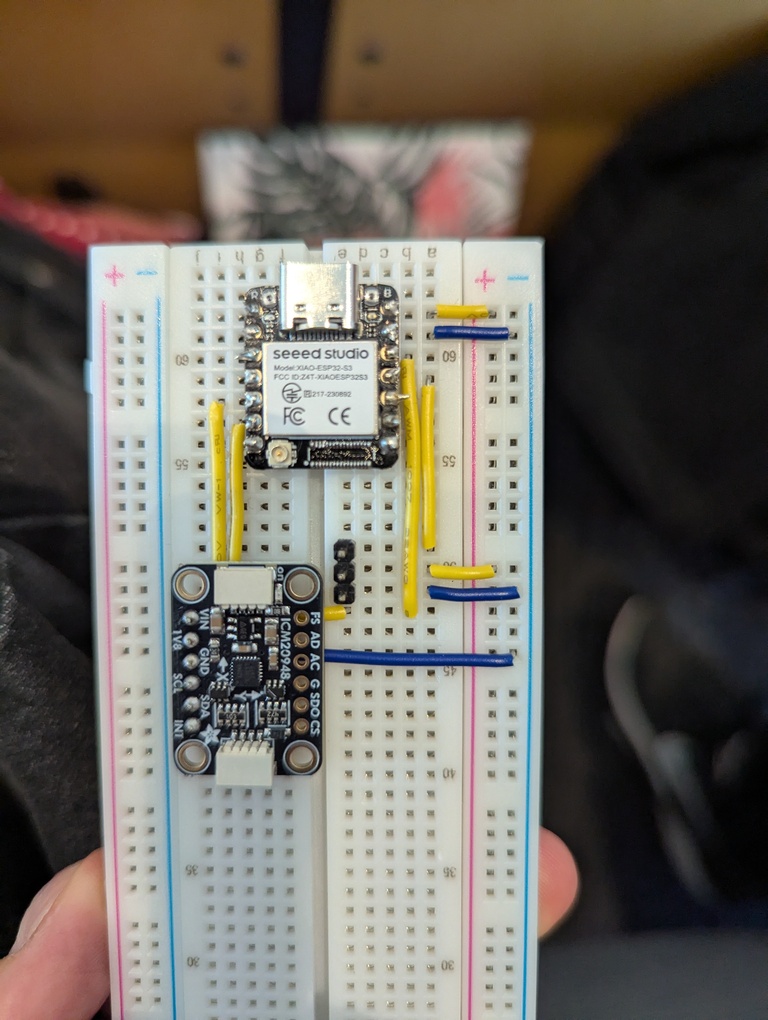

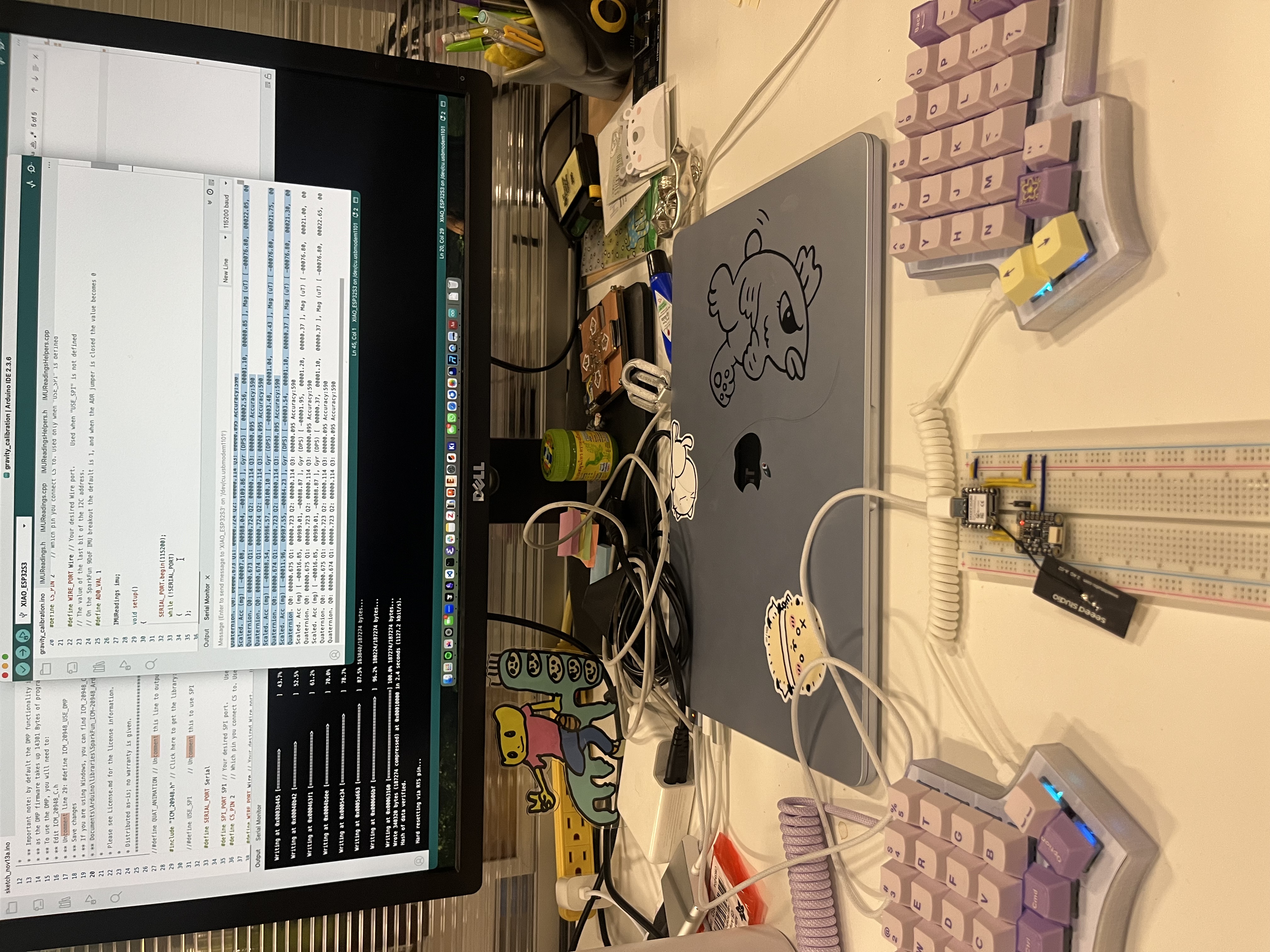

The whole team meets on the 5th floor and prepares. It's the first time we get access to a board with an IMU on it. Thanks EE team! We get familiar with the data coming out of the IMU by running a basic Adafruit example.

Sophia creates a first version of an HTML page that will act as a remote control for debugging later. Sun is building an API that will enable proper communication between the 2 pieces of the puzzle. We come up with an organizational structure of the code base that allows everyone to work on their own thing before integration will happen later.

We discuss the vector maths required to make the bot move correctly but also maintain a consistent forward axis in world space (despite its own tumbling). Yufeng has a first visualization of the IMU data.

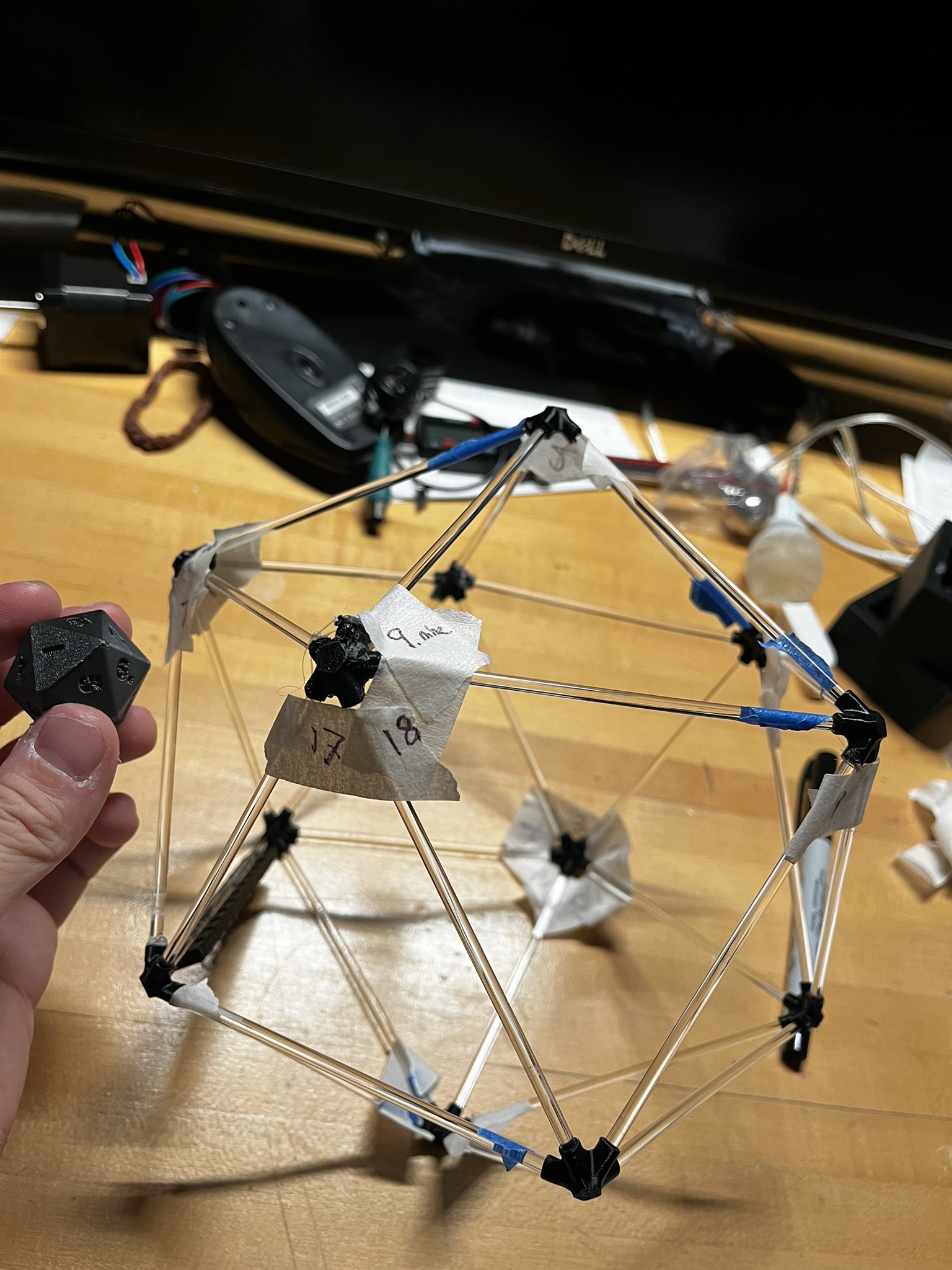

We have a quick conversation about how this thing will move. We decide that a naive algorithm for getting it to "take a step" forward is to actuate two faces adjacent to the face which is on the ground, to tip it onto the third neighboring face. Eitan had 3D-printed 20-sided dice for all of us, which we decided to use as a shared reference for how to refer to the faces. Miranda, Saetbyeol, and Eitan encode the face adjacencies into a JSON file which maps each face number to its neighbors. We generate unit normal vectors for each face of an arbitrary icosahedron. From these, Miranda and Saetbyeol implement a quick visualization for how to compute which faces to actuate given the face which is on the ground. In the visualization, the face on the ground is colored in red, the face most closely aligned with the movement vector (greatest dot product between the face normal and the movement vector) is colored in yellow, and the two faces to actuate (the other two neighbors) are colored in green.
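The selection rule described above can be sketched in a few lines of JavaScript. This is a minimal illustration rather than the code we actually wrote: the function name `facesToActuate`, the shape of the `neighbors` and `normals` maps, and the toy data are all assumptions made for the example.

```javascript
// Dot product of two 3D vectors given as [x, y, z] arrays.
function dot(a, b) {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

// neighbors: { faceId: [n1, n2, n3] }   (hypothetical shape of the adjacency JSON)
// normals:   { faceId: [x, y, z] }      (unit normals, already in world space)
function facesToActuate(groundFace, movement, neighbors, normals) {
  const adjacent = neighbors[groundFace];
  // The neighbor whose normal has the greatest dot product with the
  // movement vector is the face we want to land on.
  let target = adjacent[0];
  for (const face of adjacent) {
    if (dot(normals[face], movement) > dot(normals[target], movement)) {
      target = face;
    }
  }
  // The other two neighbors are pushed out to tip the body onto the target.
  return { target, actuate: adjacent.filter((f) => f !== target) };
}

// Toy data: face 1 is on the ground, its three neighbors point outwards.
const neighbors = { 1: [2, 3, 4] };
const normals = { 2: [1, 0, 0], 3: [-0.5, 0, 0.866], 4: [-0.5, 0, -0.866] };
const step = facesToActuate(1, [1, 0, 0], neighbors, normals);
console.log(step.target, step.actuate); // target 2, actuate faces 3 and 4
```

In the color scheme of the visualization, `groundFace` would be red, `target` yellow, and the `actuate` faces green.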

Thursday, 13th

Matti and Miranda look at 3d vector maths in the morning and figure out a (theoretical) prototype that should do the trick. It's based on a calibration step that the user initiates once the IMU sufficiently settles. The calibration step grabs the current quaternion coming out of the IMU, inverts it and stores it for later.
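A minimal sketch of that calibration idea, assuming unit quaternions stored as `{w, x, y, z}` objects and assuming the stored inverse is applied by left-multiplication (the order depends on the convention in use, which the log does not record). The names `calibrate` and `applyCalibration` are hypothetical.

```javascript
// Hamilton product a * b of two quaternions {w, x, y, z}.
function multiply(a, b) {
  return {
    w: a.w * b.w - a.x * b.x - a.y * b.y - a.z * b.z,
    x: a.w * b.x + a.x * b.w + a.y * b.z - a.z * b.y,
    y: a.w * b.y - a.x * b.z + a.y * b.w + a.z * b.x,
    z: a.w * b.z + a.x * b.y - a.y * b.x + a.z * b.w,
  };
}

// For a unit quaternion the inverse equals the conjugate.
function invert(q) {
  return { w: q.w, x: -q.x, y: -q.y, z: -q.z };
}

// Identity until the user triggers calibration.
let calibrationInverse = { w: 1, x: 0, y: 0, z: 0 };

// Called once the IMU has settled: store the inverse of the current reading.
function calibrate(current) {
  calibrationInverse = invert(current);
}

// Called for every later reading: orientation relative to the calibration pose.
function applyCalibration(current) {
  return multiply(calibrationInverse, current);
}

const pose = { w: Math.SQRT1_2, x: 0, y: Math.SQRT1_2, z: 0 }; // 90° about Y
calibrate(pose);
const relative = applyCalibration(pose); // approximately the identity quaternion
console.log(relative);
```

Feeding the calibration pose back in yields (up to rounding) the identity rotation, which is the property the calibration step relies on.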

Saetbyeol tests the muxes and gets the servos to run! Miranda modifies an example to get the quaternion reading from the IMU.

Friday, 14th

Miranda refactors 3D maths code into a Python script instead of a Jupyter notebook. Matti writes a first version of the visualization code and the first in-browser implementation of the logic that figures out when to activate the triangles for a forward move.

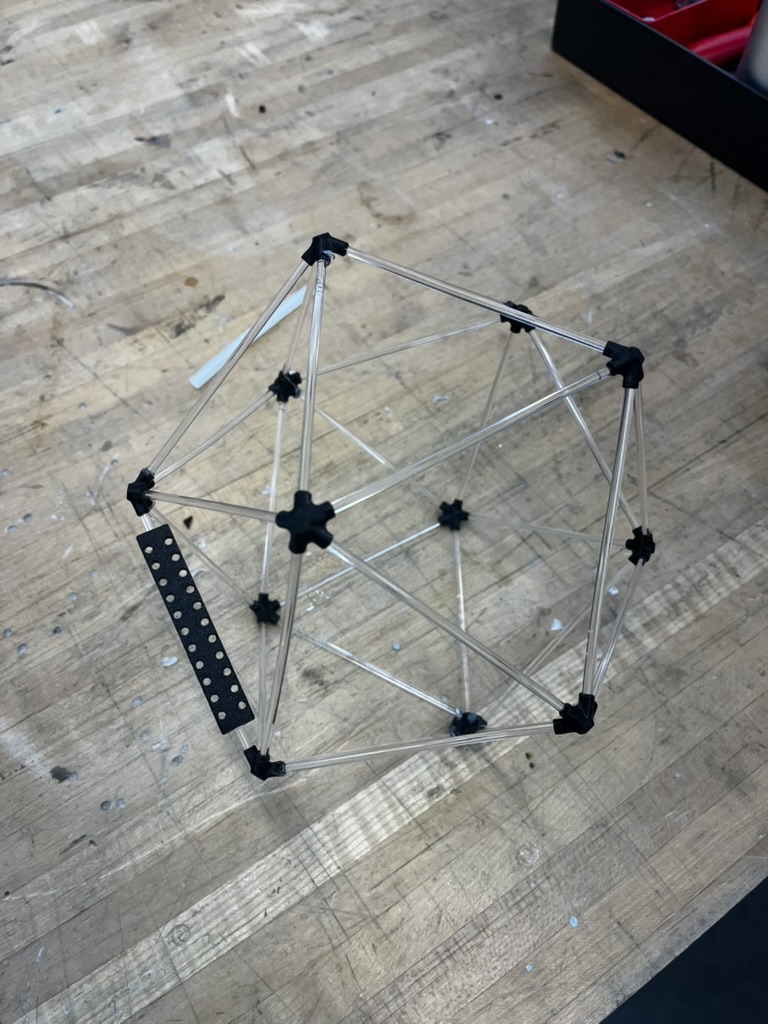

Yufeng starts making a miniature model of the shape we're working with.

Saturday, 15th

We cover the whiteboard in the fifth floor study room (which we have been affectionately referring to as the War Room) with diagrams and lists of all of the features we need. We have most of the functionality we need in bits and pieces, and we spend most of the day linking everything together and making interfaces for testing and debugging.

We discuss switching our remote control protocol from UDP to BLE because:

- it makes it much easier to connect to the ball

- we observed latency issues when too many people were around (Sun did testing during StudCom tea)

Matti provided Miranda with some MicroPython and JS code that he had written for one of the assignments, and she converted it for our project (C++ and JS). We switched to using BLE.

Matti, Saetbyeol and Sun made some failing servo code work.

Sunday, 16th

To debug an issue where the BLE signal would sometimes brown out, we moved away from sending JSON strings over BLE and came up with a more compact representation for sending commands. We discussed the change to the data stream from the remote control to the ESP32: our command encoding sends 4 leading bits to encode the command, followed by 20 data bits (one per face). Miranda implemented it. Miranda and Matti discussed the face -> [mux, channel] mapping with Ben and made a plan.

Monday, 17th

We decide to split up the feature that handles both extension and retraction of the triangles. Going forward we're letting the JS code decide when to extend and retract instead of having that timing set on the ESP32. The reasoning here is that the JS code already acts as the brain, and technically we should only retract a triangle once we can verify that we've landed on a different triangle.

We successfully implemented servo movement to 270 degrees (had 180 before) with automatic retraction (timed on the ESP32). We also move our communication from the remote control in the browser to the ESP32 to a system that communicates data for the 32 mux channels (instead of triangle faces). This works great and will help with debugging on Tuesday. We also set the respective pins on the breakout mux dev boards to align with the same I2C addresses that will be used on the final PCB. That allows us to keep our code static and not change I2C addresses when switching between testing on the breadboards and the milled PCBs.

We implement a sequencer that allows pre-planning steps/instructions. We anticipate that it might be helpful with filming if we get to a point where we want to film the icosphere moving multiple steps.

- Level 2 Remote: Added lvl2 remote interface with mapping to 32-channel representation (Matti Gruener)

- Code Quality: Major cleanup and code formatting with Prettier integration (Yufeng)

Tuesday, 18th

Some of us meet in the morning to do some late software changes in anticipation of a long day full of debugging. We focus on getting a lot of components in place that allow us to trigger parts of the system in isolation so that we have an easier time once we get access to the fully assembled system.

During assembly there are many tasks that we helped with on the software side. A short excerpt:

Tyler brought his new PCB and we helped verify that servos can be run off of all the servo ports he exposes. We also label the ports with him so we know which channels on the muxes are connected to these ports.

We have a setup that allows us to quickly zero out all the servos before assembling them. We need to make sure we know their current state before putting them into the physical system. During assembly we notice that our min/max values don't align with how the mechanical team has set up the mechanism for extending the triangles. We switch these values in our code so that extension becomes retraction and vice versa.

We have problems with one servo not extending and retracting correctly. We have to change the max value we send for extension (from 520 to 512) because the servo doesn't react to 520. Other servos do. We don't fully understand, but this works. Dimitar helped with doing the math to derive the 512 value.

We debug the audio sub-system because we encounter some problems on Tyler's amazing spherical PCB.

CBA Machine - Integrated System Documentation

Table of Contents

- System Overview

- System Architecture

- Hardware Components

- Firmware (ESP32 Microcontroller)

- Web Interface (Browser)

- Libraries and Dependencies

- Setup and Installation

- Usage Instructions

- Questions and Unclear Aspects

System Overview

The CBA Machine is an interactive robotic sculpture featuring an icosahedron geometry with 20 servo-controlled faces and a 9-axis IMU sensor for orientation tracking. The system enables real-time motion sensing, servo actuation, and audio playback, all controlled wirelessly via Bluetooth Low Energy (BLE) from a web browser.

Key Features:

- Real-time IMU orientation tracking with quaternion output

- 20 independently controlled servos organized in icosahedron face structure

- Bluetooth Low Energy (BLE) wireless control from web browser

- 3D visualization of IMU orientation using Three.js

- Audio playback from SD card via I2S amplifier

- Command sequencing for automated demonstrations

- No WiFi required - operates entirely over BLE

Use Cases:

- Interactive art installation with motion-responsive behavior

- Demonstration of robotic actuation and sensor fusion

- Educational platform for understanding quaternion-based orientation

System Architecture

The system consists of three main components:

┌─────────────────────────────────────────────────────────────┐
│                    Web Browser (Client)                     │
│  - Three.js 3D Visualization                                │
│  - BLE Communication (Web Bluetooth API)                    │
│  - Command Sequencer                                        │
│  - User Interfaces (Operation & Debugging)                  │
└──────────────────────┬──────────────────────────────────────┘
                       │ BLE
                       │ Commands: 5-byte binary protocol
                       │ Data:
                       │  - JSON (quaternion + accel) -> To browser
                       │  - Bit Array (Actuation Commands) <- From browser
┌──────────────────────▼──────────────────────────────────────┐
│                  ESP32-S3 Microcontroller                   │
│  - BLE Server                                               │
│  - IMU Data Processing (DMP Quaternion)                     │
│  - Servo Control (32 channels)                              │
│  - Audio Playback (SD Card + I2S)                           │
└──────┬────────┬──────────────┬────────┬─────────────────────┘
       │        │              │        │
       │        │              │        │
┌──────▼────┐ ┌─▼────────┐ ┌───▼─────┐ ┌▼─────────────┐
│ ICM-20948 │ │ 2x       │ │ SD Card │ │ MAX98357A    │
│ 9-DOF IMU │ │ PCA9685  │ │ Storage │ │ I2S Amplifier│
│ (I2C)     │ │ PWM      │ │ (SPI)   │ │              │
└───────────┘ │ (I2C)    │ └─────────┘ └──────┬───────┘
              └────┬─────┘                    │
                   │                          │
              ┌────▼──────────────┐   ┌───────▼───────┐
              │ 20 Servo Motors   │   │    Speaker    │
              │ (Icosahedron      │   └───────────────┘
              │  Faces 1-20)      │
              └───────────────────┘

Data Flow:

- IMU → ESP32: ICM-20948 sends quaternion + accelerometer data via I2C

- ESP32 → Browser: JSON sensor data streamed via BLE notifications (10-50 Hz)

- Browser → ESP32: Binary commands (5 bytes) via BLE writes

- ESP32 → Servos: PWM signals via dual PCA9685 controllers (32 channels total, 20 in use)

- SD Card → ESP32 → Amplifier: WAV audio streaming via I2S

Hardware Components

Primary Components

| Component | Model/Type | Interface | Purpose |

|---|---|---|---|

| Microcontroller | Seeed Studio XIAO ESP32S3 | - | Main control unit with BLE |

| IMU Sensor | ICM-20948 (9-DOF) | I2C (address 0x68/0x69) | Orientation tracking |

| PWM Controllers | 2x PCA9685 (16-channel each) | I2C (0x48, 0x60) | 20 servo channels (total) |

| Servo Motors | 20x SunFounder Digital Servo | PWM | Icosahedron face actuation |

| Audio Amplifier | MAX98357A | I2S | Audio playback |

| Storage | SD Card | SPI | Audio file storage |

| Speaker | Standard 8Ω | Analog | Audio output |

Pin Configuration (ESP32-S3)

I2C Bus (IMU + PWM Controllers):

- SDA: Default XIAO-ESP32S3 I2C SDA

- SCL: Default XIAO-ESP32S3 I2C SCL

- Clock: 400 kHz

I2S Audio (MAX98357A):

- BCLK: D1

- LRCK (WS): D0

- DIN: D2

SPI (SD Card):

- CS: Pin 21

- Other pins: Default SPI pins

Power:

- Servos: External power supply (6V)

- ESP32: USB or external 5V (through buck converter)

- PWM Controllers: Powered via I2C bus (5V)

Firmware (ESP32 Microcontroller)

The firmware is organized into modular .ino files, with controller.ino as the main entry point.

File Structure

controller/

├── controller.ino # Main program entry point

├── config.h # System configuration

├── bluetooth-name.h # BLE device name (gitignored)

├── bluetooth-name.h.example # Template for BLE name

├── servo-params.h # Servo PWM parameters

├── lib-01-ble.ino # BLE communication

├── lib-02-ble-message-handler.ino # Command parser

├── lib-03-sensor.ino # IMU sensor reading

├── lib-04-handle-move-servo.ino # Servo control

├── lib-05-handle-reset.ino # Device reset handler

├── lib-06-handle-sensor-transmission.ino # (Possibly deprecated)

└── lib-07-audio.ino # Audio playback

IMU Sensor Subsystem

File: lib-03-sensor.ino

Overview

The IMU subsystem uses the SparkFun ICM-20948 library to read orientation data from the 9-axis IMU sensor. The system leverages the sensor's Digital Motion Processor (DMP) to compute quaternion orientation in hardware, reducing CPU load on the ESP32.

Key Features

- Quaternion output: Direct quaternion computation via DMP (no Euler angle conversion)

- Accelerometer data: Raw acceleration in milli-g (mg)

- High sample rate: Configured for maximum DMP output rate (approx 225 Hz)

- FIFO buffering: Hardware FIFO prevents data loss during BLE transmission

Configuration

DMP Setup (Required): The DMP feature must be manually enabled in the library source code:

- Edit: `~/Arduino/libraries/SparkFun_9DoF_IMU_Breakout_-_ICM_20948_-_Arduino_Library/src/util/ICM_20948_C.h`

- Uncomment this line: `#define ICM_20948_USE_DMP`

- Recompile the library

I2C Configuration:

- Address: AD0 = 1 (address 0x69)

- Clock speed: 400 kHz

- Bus: Wire (default I2C bus)

Data Structures

struct Quaternion {

float w; // q0 (scalar component)

float x; // q1 (i component)

float y; // q2 (j component)

float z; // q3 (k component)

uint16_t accuracy; // Heading accuracy estimate

};

struct Vector3D {

float x; // Acceleration X (mg)

float y; // Acceleration Y (mg)

float z; // Acceleration Z (mg)

};

Key Functions

void setupSensor()

- Initializes I2C bus at 400 kHz

- Connects to ICM-20948 at address 0x69

- Configures DMP for quaternion output

- Sets maximum ODR (Output Data Rate)

- Enables FIFO buffering

- Retries up to 10 times if initialization fails

void updateSensorData()

- Reads latest data from DMP FIFO

- Updates quaternion and accelerometer values

- Must be called before `getSensorJSON()`

String getSensorJSON()

- Returns JSON string with format:

{ "w": 0.9966, "x": 0.0368, "y": 0.0132, "z": 0.0718, "ax": 5.13, "ay": 15.63, "az": 997.31 }

bool hasMoreSensorData()

- Returns `true` if FIFO contains additional samples

- Used to minimize delays when data is available

DMP Configuration Details

The DMP is configured with:

- Sensor: `INV_ICM20948_SENSOR_ORIENTATION` (9-axis quaternion)

- ODR: 0 (maximum rate, ~225 Hz)

- FIFO: Enabled for buffering

- Output: 9-axis quaternion (accelerometer + gyro + magnetometer fusion)

Error Handling

- Initialization failures: Retries up to 10 times with 500ms delays

- Status checking: Uses `ICM_20948_Status_e` for error detection

- FIFO overflow: Automatically handled by DMP

Coordinate System

The IMU reports data in its local coordinate system:

- X-axis: Forward

- Y-axis: Left

- Z-axis: Up

Coordinate transformations are applied in the web interface (see brains.js).

Servo Control Subsystem

Files: lib-04-handle-move-servo.ino, servo-params.h

Overview

The servo subsystem controls 20 independent servo motors arranged as faces of an icosahedron. Two PCA9685 PWM controllers (16 channels each) provide the necessary I/O expansion.

Hardware Architecture

ESP32 I2C Bus

│

├── PCA9685 #1 (0x48) ─── Servos 0-15 (Faces 1-16)

│

└── PCA9685 #2 (0x60) ─── Servos 16-19 (Faces 17-20)

Servo Parameters

File: servo-params.h

#define SERVO_MIN 100 // Retracted position (PWM value)

#define SERVO_MAX 520 // Extended position (PWM value)

#define SERVO_FREQ 50 // PWM frequency (Hz)

Servo Model: SunFounder Digital Servo

PWM Range: 100-520 (corresponds to ~0-180° rotation)
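To relate these raw values to servo pulse widths, the following sketch converts between pulse width and PCA9685 counts. It assumes that the values in servo-params.h are counts of the PCA9685's 12-bit PWM counter (4096 steps per period), which is how the chip's resolution is specified; the helper names are hypothetical and the calculation is illustrative, not taken from the firmware.

```javascript
const PCA9685_STEPS = 4096; // 12-bit counter: 4096 steps per PWM period

// Convert a pulse width in microseconds to counter steps at a given PWM frequency.
function pulseToTicks(pulseMicros, freqHz) {
  const periodMicros = 1e6 / freqHz; // 20000 µs at 50 Hz
  return Math.round((pulseMicros / periodMicros) * PCA9685_STEPS);
}

// Convert counter steps back to a pulse width in microseconds.
function ticksToPulse(ticks, freqHz) {
  return (ticks / PCA9685_STEPS) * (1e6 / freqHz);
}

console.log(ticksToPulse(100, 50)); // 488.28125 µs   (SERVO_MIN)
console.log(ticksToPulse(520, 50)); // 2539.0625 µs   (SERVO_MAX)
console.log(pulseToTicks(2500, 50)); // 512
```

Under this assumption a 2500 µs pulse corresponds to exactly 512 counts, which matches the reduced maximum mentioned in the process log for the servo that did not react to 520. The log does not record how that value was derived, so treat the match as suggestive only.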

Key Functions

void setupServo()

- Initializes I2C bus via `Wire.begin()`

- Configures both PCA9685 controllers:

- Sets oscillator frequency to 27 MHz

- Sets PWM frequency to 50 Hz

- Applies 10ms stabilization delay

void servo_write(int channel, int pwmVal)

- Parameters:

- `channel`: 0-19 (servo channel)

- `pwmVal`: 100-520 (PWM pulse width)

- Behavior:

- Channels 0-15 → PCA9685 #1 (0x48)

- Channels 16-19 → PCA9685 #2 (0x60), mapped to 0-3

void reset_servo_positions()

- Retracts all 20 servos to `SERVO_MIN` position

- Called during system initialization
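The channel routing described for `servo_write` can be illustrated as follows. The firmware does this in C++; the sketch below uses JavaScript only to keep the examples in this document in one language, and `resolveChannel` is a hypothetical helper name.

```javascript
const CONTROLLER_ADDRESSES = [0x48, 0x60]; // PCA9685 #1 and #2, as listed above
const CHANNELS_PER_CONTROLLER = 16;

// Map a global servo channel (0-19) to a controller address and a local channel.
function resolveChannel(channel) {
  if (!Number.isInteger(channel) || channel < 0 || channel > 19) {
    throw new RangeError(`channel must be an integer in 0-19, got ${channel}`);
  }
  return {
    address: CONTROLLER_ADDRESSES[Math.floor(channel / CHANNELS_PER_CONTROLLER)],
    localChannel: channel % CHANNELS_PER_CONTROLLER,
  };
}

console.log(resolveChannel(15)); // address 0x48 (72), local channel 15
console.log(resolveChannel(16)); // address 0x60 (96), local channel 0
```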

Command Handling

Servo commands are received as 5-byte binary messages parsed in lib-02-ble-message-handler.ino:

Extend Servo:

- Command bits: `0b0001`

- Data bits: 32-bit bitmask (20 bits used for 20 servos)

- Action: Sets specified servos to `SERVO_MAX`

Retract Servo:

- Command bits: `0b0010`

- Data bits: 32-bit bitmask (20 bits used for 20 servos)

- Action: Sets specified servos to `SERVO_MIN`

Example: To extend servos 1, 5, and 10:

- Face IDs: 1, 5, 10

- Bit indices: 0, 4, 9

- Bitmask: `0x00000211` (bits 0, 4, 9 set)
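The face-to-bitmask step in this example can be sketched as below. The function name `facesToBitmask` is hypothetical; the only rule taken from the text is that face ID n maps to bit index n - 1.

```javascript
// Build the 32-bit bitmask for a list of face IDs (1-20).
function facesToBitmask(faceIds) {
  let mask = 0;
  for (const id of faceIds) {
    if (!Number.isInteger(id) || id < 1 || id > 20) {
      throw new RangeError(`face ID must be an integer in 1-20, got ${id}`);
    }
    mask |= 1 << (id - 1); // face 1 -> bit 0, face 20 -> bit 19
  }
  return mask >>> 0; // keep the result unsigned
}

const mask = facesToBitmask([1, 5, 10]);
console.log("0x" + mask.toString(16).padStart(8, "0")); // 0x00000211
```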

Calibration Considerations

⚠️ IMPORTANT: Servo min/max values may need adjustment per servo. See Questions section.

Networking/BLE Subsystem

Files: lib-01-ble.ino, lib-02-ble-message-handler.ino

Overview

The BLE subsystem implements a Nordic UART Service (NUS) for bidirectional communication with a web browser using the Web Bluetooth API.

BLE Configuration

Device Name:

- Default: "ESP32-Labubu"

- Customizable via `bluetooth-name.h` (gitignored)

Service UUID: `6e400001-b5a3-f393-e0a9-e50e24dcca9e` (Nordic UART Service)

Characteristics:

TX Characteristic (ESP32 → Browser):

- UUID: `6e400003-b5a3-f393-e0a9-e50e24dcca9e`

- Property: NOTIFY

- Purpose: Stream sensor data as JSON

RX Characteristic (Browser → ESP32):

- UUID: `6e400002-b5a3-f393-e0a9-e50e24dcca9e`

- Property: WRITE

- Purpose: Receive commands as 5-byte binary

Communication Protocol

ESP32 → Browser (Sensor Data)

Format: JSON strings sent via BLE notifications

{ "w": 0.875, "x": 0.0243, "y": 0.0393, "z": -0.482, "ax": 17.5781, "ay": 3.1738, "az": 1025.3906 }

Rate: 10-50 Hz (depending on FIFO availability)

Browser → ESP32 (Commands)

Format: 5-byte binary encoding

Byte Layout:

┌──────────┬──────────┬──────────┬──────────┬──────────┐
│  Byte 0  │  Byte 1  │  Byte 2  │  Byte 3  │  Byte 4  │
├──────────┼──────────┼──────────┼──────────┼──────────┤
│ CCCCDDDD │ DDDDDDDD │ DDDDDDDD │ DDDDDDDD │ DDDD0000 │
└──────────┴──────────┴──────────┴──────────┴──────────┘
  ↑   ↑                                       ↑
  │   └── Data bits [0:3]                     └── Data bits [28:31]
  └────── Command bits [0:3]

Total: 4-bit command + 32-bit data

Command Encodings:

| Command | Binary | Data Field | Description |
|---|---|---|---|
| extend_servo | 0b0001 | 32-bit bitmask (20 bits used) | Extend specified servos |
| retract_servo | 0b0010 | 32-bit bitmask (20 bits used) | Retract specified servos |
| play_audio | 0b0100 | 20-bit audio ID | Play audio file |
| reset | 0b1000 | (unused) | Restart ESP32 |

Example: Extend servos 1, 2, 3

- Command: `0b0001`

- Data: `0x00000007` (bits 0, 1, 2 set)

- Bytes: `[0x71, 0x00, 0x00, 0x00, 0x00]`
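A sketch of an encoder that reproduces the bytes in this example. The bit order is an inference from the example itself (`0x71` for command `0b0001` and data `0x7`): the command occupies the low nibble of byte 0 and the data bits follow in ascending order, i.e. the 36-bit value `command | (data << 4)` stored least-significant byte first. The names `packCommand` and `unpackCommand` are hypothetical and are not the functions in bluetooth.js or the firmware.

```javascript
const COMMANDS = {
  extend_servo: 0b0001,
  retract_servo: 0b0010,
  play_audio: 0b0100,
  reset: 0b1000,
};

// Pack a 4-bit command and a 32-bit data field into 5 bytes.
function packCommand(command, data) {
  const d = data >>> 0; // treat data as unsigned 32-bit
  return Uint8Array.of(
    (command & 0x0f) | ((d & 0x0f) << 4), // byte 0: command + data bits 0-3
    (d >>> 4) & 0xff,                     // byte 1: data bits 4-11
    (d >>> 12) & 0xff,                    // byte 2: data bits 12-19
    (d >>> 20) & 0xff,                    // byte 3: data bits 20-27
    (d >>> 28) & 0x0f                     // byte 4: data bits 28-31, upper nibble zero
  );
}

// Inverse of packCommand, e.g. for testing the encoder.
function unpackCommand(bytes) {
  const command = bytes[0] & 0x0f;
  const data =
    ((bytes[0] >>> 4) |
      (bytes[1] << 4) |
      (bytes[2] << 12) |
      (bytes[3] << 20) |
      (bytes[4] << 28)) >>> 0;
  return { command, data };
}

const bytes = packCommand(COMMANDS.extend_servo, 0x00000007);
console.log(Array.from(bytes, (b) => "0x" + b.toString(16).padStart(2, "0")).join(", "));
// 0x71, 0x00, 0x00, 0x00, 0x00
```

The per-byte shifts avoid computing `data << 4` directly, which would overflow JavaScript's 32-bit bitwise operators.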

BLE Functions

void setupBLE()

- Initializes BLE device with name

- Creates Nordic UART Service

- Configures TX/RX characteristics

- Starts advertising

void sendBLEMessage(String message)

- Sends string via BLE notification

- Used for sensor data streaming

bool hasBLEMessage()

- Checks if command received

- Returns `true` if 5-byte message available

void getBLEMessage(uint8_t* data, size_t& len)

- Retrieves received command

- Copies to buffer and clears flag

void handleBLEConnection()

- Manages connection state

- Restarts advertising on disconnect

Error Handling

- Connection loss: Auto-restart advertising after 500ms

- Invalid message length: Logs error, discards message

- Unknown commands: Logs error, continues operation

Audio Subsystem

File: lib-07-audio.ino

Overview

The audio subsystem streams 16-bit PCM WAV files from an SD card to a MAX98357A I2S amplifier for playback through a speaker.

Hardware Components

| Component | Interface | Pins |

|---|---|---|

| SD Card Reader | SPI | CS: Pin 21 |

| MAX98357A Amplifier | I2S | BCLK: D1, LRCK: D0, DIN: D2 |

Audio Format Requirements

- Format: WAV (RIFF header)

- Encoding: PCM (uncompressed)

- Bit depth: 16-bit

- Sample rate: Any (dynamically configured)

- Channels: Mono or stereo (converted to mono)

File Organization

Audio files must be stored in /audio/ directory on SD card:

/audio/

├── audio_0000_filename.wav

├── audio_0001_another.wav

├── audio_0042_example.wav

└── ...

Naming Convention: `audio_XXXX*.wav`

- `XXXX`: Zero-padded 4-digit ID (0000-9999)

- Suffix after ID: Optional (ignored)
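The naming convention can be illustrated with a small lookup sketch. It operates on a plain list of file names rather than the SD card, and `audioPrefix` and `findAudioFile` are hypothetical names; the firmware's own lookup is written in C++ and may differ in detail.

```javascript
// Build the file name prefix for an audio ID, e.g. 42 -> "audio_0042".
function audioPrefix(audioId) {
  if (!Number.isInteger(audioId) || audioId < 0 || audioId > 9999) {
    throw new RangeError(`audio ID must be an integer in 0-9999, got ${audioId}`);
  }
  return "audio_" + String(audioId).padStart(4, "0");
}

// Return the first file name matching audio_XXXX*.wav, or null if none matches.
function findAudioFile(audioId, fileNames) {
  const prefix = audioPrefix(audioId);
  const match = fileNames.find(
    (name) => name.startsWith(prefix) && name.toLowerCase().endsWith(".wav")
  );
  return match || null;
}

const files = ["audio_0000_filename.wav", "audio_0001_another.wav", "audio_0042_example.wav"];
console.log(findAudioFile(42, files)); // audio_0042_example.wav
console.log(findAudioFile(7, files)); // null
```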

Key Functions

void setupAudio()

- Initializes SD card via SPI

- Checks for SD mount success

- Does NOT initialize I2S (done during playback)

void playAudioById(uint32_t audioId)

- Parameter: Audio ID (0-1048575, but files use 0-9999)

- Behavior:

- Searches `/audio/` for file matching `audio_{ID:04d}*.wav`

- Opens file and validates WAV header

- Initializes I2S with sample rate from WAV header

- Starts playback (non-blocking)

void playAudio()

- Called from main loop

- Streams audio data in chunks (4096 bytes)

- Applies volume gain (32.0x multiplier)

- Clamps samples to the 16-bit range to prevent integer overflow after the gain is applied

- Auto-stops when file ends

bool initI2S(uint32_t sampleRate)

- Configures I2S for specified sample rate

- Settings:

- Mode: Master TX

- Bits per sample: 16-bit

- Channel format: RIGHT only (mono)

- DMA buffers: 8 buffers × 512 frames (`dma_buf_len` counts frames, not bytes)

- APLL: Enabled for accurate clock

- Uninstalls previous I2S driver before reinitializing

I2S Configuration Details

i2s_config_t config = {

.mode = I2S_MODE_MASTER | I2S_MODE_TX,

.sample_rate = <from WAV file>,

.bits_per_sample = I2S_BITS_PER_SAMPLE_16BIT,

.channel_format = I2S_CHANNEL_FMT_ONLY_RIGHT,

.communication_format = I2S_COMM_FORMAT_I2S_MSB,

.intr_alloc_flags = ESP_INTR_FLAG_LEVEL1,

.dma_buf_count = 8,

.dma_buf_len = 512,

.use_apll = true, // Precise clock

.tx_desc_auto_clear = true,

.fixed_mclk = 0

};

Volume Control

Current Implementation:

- Fixed gain: `32.0f` (hardcoded in `playAudio()`)

- Applied as multiplication: `sample = sample * 32.0`

- Clamping: Prevents overflow (limits to ±32767)

Modification:

To change volume, edit volumeGain variable in lib-07-audio.ino.
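The gain-and-clamp step can be sketched as follows. This is an illustration in JavaScript of the arithmetic only; the firmware performs it in C++ on the chunks read from the SD card, and `applyGain` is a hypothetical name.

```javascript
const SAMPLE_LIMIT = 32767; // clamp range stated above: ±32767

// Multiply 16-bit PCM samples by a gain factor and clamp to the valid range.
function applyGain(samples, gain) {
  const out = new Int16Array(samples.length);
  for (let i = 0; i < samples.length; i++) {
    const scaled = Math.round(samples[i] * gain);
    // Without the clamp, large values would wrap around when stored as int16.
    out[i] = Math.max(-SAMPLE_LIMIT, Math.min(SAMPLE_LIMIT, scaled));
  }
  return out;
}

const louder = applyGain(Int16Array.of(100, 2000, -2000), 32.0);
console.log(Array.from(louder)); // [ 3200, 32767, -32767 ]
```

With a gain of 32, any input sample above roughly ±1024 saturates, so this setting only leaves headroom for quiet recordings.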

Command Integration

Audio playback is triggered via BLE command:

- Command: `play_audio`

- Command bits: `0b0100`

- Data: Lower 20 bits = audio ID (0-1048575)

Example: Play audio file 42

- Command: `play_audio`

- Data: `0x00002A` (42 in hex)

- Finds: `/audio/audio_0042*.wav`

Error Handling

- SD card mount failure: Logged, system continues

- File not found: Logged, playback not started

- Invalid WAV format: Logged, file closed

- I2S write failure: Stops playback, closes file

Main Loop Architecture

File: controller.ino

Setup Sequence

void setup() {

Serial.begin(115200); // Debug output

setupBLE(); // Initialize BLE

setupSensor(); // Initialize IMU

setupServo(); // Initialize servos

setupAudio(); // Initialize audio

reset_servo_positions(); // Retract all servos

}

Main Loop

void loop() {

// 1. Handle BLE connection state

handleBLEConnection();

// 2. Read IMU sensor

updateSensorData();

// 3. Send sensor data if connected

sendSensorDataIfReady();

// 4. Process incoming commands

if (hasBLEMessage()) {

uint8_t data[5];

size_t len;

getBLEMessage(data, len);

if (len == 5) {

handleBLECommand(data, len);

}

}

// 5. Stream audio if playing

playAudio();

// 6. Delay only if no more sensor data

if (!hasMoreSensorData()) {

delay(10); // 100 Hz loop when idle

}

}

Loop Timing Strategy

The loop uses adaptive timing:

- No delay when IMU FIFO has data (high-speed streaming)

- 10ms delay when FIFO empty (prevents CPU spinning)

- Typical rate: 10-50 Hz depending on sensor activity

Web Interface (Browser)

Location: software/integration/server/

Overview

The web interface provides real-time 3D visualization, BLE control, and command sequencing capabilities using vanilla JavaScript and Three.js.

Architecture

index.html

│

├── scheduler.js # Async task queue

├── bluetooth.js # BLE communication

├── threejs-vis.js # 3D scene setup

├── brains.js # IMU processing & face detection

├── calibration.js # IMU calibration

├── acceleration.js # Acceleration vector visualization

├── faceneighbors.js # Face topology data

├── cmd_sequencer.js # Command sequencer UI

├── utils.js # Utility functions

└── voice.js # Voice commands (optional)

Key Modules

bluetooth.js

Purpose: BLE communication layer

Key Functions:

BLE.connect(dataCallback); // Connect to ESP32

BLE.disconnect(); // Disconnect

BLE.send(cmd, args); // Send command (5-byte encoding)

BLE.sendCommand(cmd, args); // High-level command API

BLE.isConnected(); // Check connection status

Command Encoding:

function encodeCommand(cmd, args) {

// Returns Uint8Array(5) with binary encoding

// See "Networking/BLE Subsystem" for format

}

Features:

- Automatic command queueing (via `AsyncTaskScheduler`)

- JSON data parsing and IMU visualization callback

- Auto-reconnection handling

- Connection state events

threejs-vis.js

Purpose: 3D visualization of IMU orientation

Features:

- Loads icosahedron model (GLB format)

- Renders face normals (blue lines)

- Highlights down-facing face (yellow)

- Shows neighbor faces (green/orange)

- Orthographic camera with orbit controls

- Coordinate axis labels

Key Functions:

initThreeJS(); // Initialize scene

updateIMURotationData(x, y, z, w); // Apply quaternion rotation

brains.js

Purpose: IMU data processing and face detection logic

Features:

- Quaternion-based rotation

- Coordinate system transformation (IMU → Three.js)

- Calibration support (nulls out initial orientation)

- Down-facing face detection (gravity alignment)

- Movement-aligned neighbor detection

Key Functions:

updateIMURotationData(x, y, z, w, ax, ay, az);

findDownTriangle(); // Detect lowest face

findMovementAlignedNeighbor(); // Find motion-aligned faces

getGreenNeighbors(); // Get neighbor face IDs

Face Detection Logic:

- Transform quaternion to Three.js coordinate system

- Apply calibration offset (if calibrated)

- Find face normal most aligned with world down (-Y axis)

- Highlight face yellow (threshold: 0.7 dot product)

- Find neighbor most aligned with movement vector

- Highlight best neighbor orange, others green
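The down-face part of this logic can be sketched without Three.js as shown below. It assumes the quaternion has already been transformed and calibrated, that face normals are unit vectors in the body frame, and that world down is -Y with the 0.7 threshold listed above. `rotate`, `findDownFace`, and the toy normals are hypothetical and are not the code in brains.js.

```javascript
// Rotate vector v = [x, y, z] by unit quaternion q = {w, x, y, z}
// using v' = v + w*t + u × t, where u = (x, y, z) and t = 2 * (u × v).
function rotate(q, v) {
  const cross = (a, b) => [
    a[1] * b[2] - a[2] * b[1],
    a[2] * b[0] - a[0] * b[2],
    a[0] * b[1] - a[1] * b[0],
  ];
  const u = [q.x, q.y, q.z];
  const t = cross(u, v).map((c) => 2 * c);
  const ut = cross(u, t);
  return v.map((c, i) => c + q.w * t[i] + ut[i]);
}

const WORLD_DOWN = [0, -1, 0];
const DOWN_THRESHOLD = 0.7;

// Return the face whose rotated normal is most aligned with world down,
// or null if no face passes the threshold (e.g. while tumbling).
function findDownFace(q, normals) {
  let best = null;
  let bestDot = -Infinity;
  for (const [face, normal] of Object.entries(normals)) {
    const r = rotate(q, normal);
    const d = r[0] * WORLD_DOWN[0] + r[1] * WORLD_DOWN[1] + r[2] * WORLD_DOWN[2];
    if (d > bestDot) {
      bestDot = d;
      best = Number(face);
    }
  }
  return bestDot >= DOWN_THRESHOLD ? best : null;
}

// Toy normals for three faces (not the real icosahedron data).
const toyNormals = { 1: [0, 1, 0], 2: [0, -1, 0], 3: [1, 0, 0] };
const upright = findDownFace({ w: 1, x: 0, y: 0, z: 0 }, toyNormals); // face 2
const flipped = findDownFace({ w: 0, x: 1, y: 0, z: 0 }, toyNormals); // face 1 (180° about X)
console.log(upright, flipped);
```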

cmd_sequencer.js

Purpose: Command sequence builder and executor

Features:

- Add/remove/reorder commands

- Set delays between commands

- Import/export sequences (text format)

- Real-time execution with visual feedback

- Error recovery (continue on failure)

Command Types:

- `extend_servo`: Extend specified servos

- `retract_servo`: Retract specified servos

- `move_servo`: Extend → Wait → Retract

- `reset`: Restart ESP32

Sequence File Format:

# Comment line

move_servo 1,2,3 1000 # Command Args Delay(ms)

reset 500

move_servo 5 2000
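A minimal parser for this text format might look like the following. It assumes that everything after `#` is a comment, that the last token on a line is the delay in milliseconds, and that a middle token, when present, is a comma-separated list of face IDs. `parseSequence` is a hypothetical name, not the function in cmd_sequencer.js.

```javascript
// Parse a sequence file into [{ command, args, delayMs }, ...].
function parseSequence(text) {
  const steps = [];
  for (const rawLine of text.split("\n")) {
    const line = rawLine.split("#")[0].trim(); // drop comments and whitespace
    if (line === "") continue;
    const tokens = line.split(/\s+/);
    if (tokens.length < 2 || tokens.length > 3) {
      throw new SyntaxError(`expected "command [args] delay", got: ${line}`);
    }
    const delayMs = Number(tokens[tokens.length - 1]);
    if (!Number.isFinite(delayMs)) {
      throw new SyntaxError(`invalid delay in line: ${line}`);
    }
    const args = tokens.length === 3 ? tokens[1].split(",").map(Number) : [];
    steps.push({ command: tokens[0], args, delayMs });
  }
  return steps;
}

const example = [
  "# Comment line",
  "move_servo 1,2,3 1000 # Command Args Delay(ms)",
  "reset 500",
  "move_servo 5 2000",
].join("\n");
const steps = parseSequence(example);
console.log(steps.length, steps[0]);
```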

calibration.js

Purpose: IMU orientation calibration

Features:

- Stores current orientation as reference

- Computes inverse quaternion

- Applies offset to future rotations

- Nulls out initial tilt

Usage:

- Position device in desired "neutral" orientation

- Click "Calibrate" button

- All future rotations relative to calibration pose

scheduler.js

Purpose: Async task queue for sequential BLE writes

Features:

- Ensures one BLE write at a time (prevents race conditions)

- Queue-based execution

- Error handling (failed tasks don't stop queue)

- Clear all pending tasks

Why Needed:

BLE writeValue() calls must be sequential. Concurrent writes cause failures.
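One common way to serialize writes is to chain each task onto the previous one's promise. The sketch below shows that pattern with a stand-in for the BLE write; `SequentialQueue` and `fakeWrite` are hypothetical names, and the pattern is not necessarily how `AsyncTaskScheduler` in scheduler.js is implemented.

```javascript
class SequentialQueue {
  constructor() {
    this.tail = Promise.resolve(); // resolves when the last queued task settles
  }

  // task: a function returning a promise (e.g. () => characteristic.writeValue(bytes))
  enqueue(task) {
    const result = this.tail.then(() => task());
    // Swallow errors on the internal chain so a failed task doesn't block the queue.
    this.tail = result.catch(() => {});
    return result; // the caller still sees the task's own success or failure
  }
}

// Stand-in for a BLE write: records its label after a delay.
function fakeWrite(log, label, ms) {
  return new Promise((resolve) =>
    setTimeout(() => {
      log.push(label);
      resolve(label);
    }, ms)
  );
}

const log = [];
const queue = new SequentialQueue();
queue.enqueue(() => fakeWrite(log, "first", 20));
queue
  .enqueue(() => Promise.reject(new Error("simulated write failure")))
  .catch(() => log.push("failed"));
queue
  .enqueue(() => fakeWrite(log, "third", 1))
  .then(() => console.log(log.join(", "))); // first, failed, third
```

Even though the third write is much faster than the first, it only starts after the earlier tasks have settled, and the failed task in the middle does not stop it from running.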

UI Elements

Main Controls:

- Connect Button: Initiates BLE pairing

- Disconnect Button: Closes BLE connection

- Calibrate Button: Sets IMU reference orientation

- Reset ESP32 Button: Restarts device

Command Sequencer Panel:

- Add Command: Create new command entry

- Run Sequence: Execute all commands in order

- Stop Sequence: Halt execution

- Upload/Download: Import/export sequence files

- Command List: Drag-to-reorder, edit inline

3D Viewport:

- Left-click + drag: Rotate camera

- Scroll wheel: Zoom

- Color coding:

- Yellow: Down-facing face

- Orange: Movement-aligned neighbor

- Green: Other neighbors

- Gray: Inactive faces

Deployment

Local Testing:

cd software/integration/server

npx http-server -p 8080

# Open http://localhost:8080

Production (GitLab Pages):

https://classes.pages.cba.mit.edu/863.25/CBA/cba-machine/index.html

Requirements:

- Modern browser: Chrome, Edge, Opera (Web Bluetooth API)

- HTTPS or localhost (secure context required)

Libraries and Dependencies

ESP32 Firmware

Arduino Platform

Installation: Follow Espressif Arduino-ESP32 installation guide

| Platform | Version | Path |

|---|---|---|

| esp32:esp32 | 3.3.1 | ~/.arduino15/packages/esp32/hardware/esp32/3.3.1 |

Board Selection: "XIAO_ESP32S3" (Seeed Studio XIAO ESP32S3)

Arduino Libraries

| Library | Version | Installation Method | Path |

|---|---|---|---|

| SparkFun ICM-20948 | 1.3.2 | Arduino Library Manager | ~/Arduino/libraries/SparkFun_9DoF_IMU_Breakout_-_ICM_20948_-_Arduino_Library |

| Adafruit PWM Servo Driver | (Latest) | Arduino Library Manager | ~/Arduino/libraries/Adafruit_PWM_Servo_Driver_Library |

| BLE | 3.3.0 | Included with ESP32 platform | ~/.arduino15/packages/esp32/hardware/esp32/3.3.1/libraries/BLE |

| Wire | 3.3.0 | Included with ESP32 platform | ~/.arduino15/packages/esp32/hardware/esp32/3.3.1/libraries/Wire |

| SPI | 3.3.0 | Included with ESP32 platform | ~/.arduino15/packages/esp32/hardware/esp32/3.3.1/libraries/SPI |

| SD | (Included) | Part of ESP32 core | (Built-in) |

| FS | (Included) | Part of ESP32 core | (Built-in) |

Critical Setup Step for ICM-20948:

⚠️ MUST ENABLE DMP SUPPORT

Edit the library header file:

# Linux/Mac

nano ~/Arduino/libraries/SparkFun_9DoF_IMU_Breakout_-_ICM_20948_-_Arduino_Library/src/util/ICM_20948_C.h

# Windows

# Open: Documents\Arduino\libraries\SparkFun_9DoF_IMU_Breakout_-_ICM_20948_-_Arduino_Library\src\util\ICM_20948_C.h

Uncomment line 29:

#define ICM_20948_USE_DMP

Verification: After editing, recompile the controller sketch. If successful, you'll see quaternion data streaming.

Node.js (Development Tools)

File: software/integration/package.json

{
  "dependencies": {
    "ws": "^8.18.3"
  },
  "devDependencies": {
    "concurrently": "^9.2.1"
  }
}

- ws: WebSocket server (for optional features)

- concurrently: Parallel command execution

Installation:

cd software/integration

npm install

NPM Scripts:

npm run build:controller # Compile firmware

npm run upload:controller # Upload to ESP32 (/dev/ttyACM0)

npm run monitor # Serial monitor (115200 baud)

npm run dev:controller # Build + Upload + Monitor

npm run serve # Start web server

Web Interface (Browser)

External Dependencies (CDN):

- Three.js (r128+): 3D rendering library

- OrbitControls: Camera control extension for Three.js

Loaded via HTML:

<script src="https://cdnjs.cloudflare.com/ajax/libs/three.js/r128/three.min.js"></script>

<script src="https://cdn.jsdelivr.net/npm/three@0.128.0/examples/js/controls/OrbitControls.js"></script>

No Build Process Required: All code is vanilla JavaScript.

Setup and Installation

1. Hardware Assembly

Components Needed:

- Seeed Studio XIAO ESP32S3 board

- ICM-20948 breakout board

- 2× PCA9685 16-channel PWM controllers

- 20× SunFounder digital servos

- MAX98357A I2S amplifier

- SD card with reader

- Speaker (8Ω, power TBD)

- External power supply for servos

Wiring:

I2C Bus (shared):

- ICM-20948 → ESP32 (I2C address 0x69)

- PCA9685 #1 → ESP32 (I2C address 0x48)

- PCA9685 #2 → ESP32 (I2C address 0x60)

I2S Audio:

- MAX98357A BCLK → ESP32 D1

- MAX98357A LRCK → ESP32 D0

- MAX98357A DIN → ESP32 D2

SPI (SD Card):

- SD CS → ESP32 Pin 21

- SD MOSI/MISO/SCK → Default SPI pins

Servos:

- Connect to PCA9685 channels 0-19 (16 on first controller, 4 on second)

- Use external power supply for servo power rails

2. Firmware Setup

A. Install Arduino IDE and ESP32 Support

- Download Arduino IDE 2.x from arduino.cc

- Install ESP32 board support:

- Open Arduino IDE

- Go to File > Preferences

- Add to "Additional Board Manager URLs": https://espressif.github.io/arduino-esp32/package_esp32_index.json

- Go to Tools > Board > Boards Manager

- Search "esp32" and install "esp32 by Espressif Systems" (v3.3.1)

B. Install Required Libraries

- Open Tools > Manage Libraries

- Install:

- "SparkFun ICM-20948" by SparkFun Electronics (v1.3.2)

- "Adafruit PWM Servo Driver" by Adafruit

C. Enable DMP Support (Critical!)

# Linux/Mac

nano ~/Arduino/libraries/SparkFun_9DoF_IMU_Breakout_-_ICM_20948_-_Arduino_Library/src/util/ICM_20948_C.h

# Uncomment line 29:

#define ICM_20948_USE_DMP
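If you would rather script this edit than do it by hand, the following sketch shows the idea on the header's text. The helper names enableDmp and isDmpEnabled are hypothetical, and the sample line is illustrative rather than copied from the library. The sketch matches the define wherever it appears instead of relying on the line number.

```javascript
// Remove the leading "//" from a commented-out ICM_20948_USE_DMP define.
// Text that is already uncommented is returned unchanged.
function enableDmp(headerText) {
  const pattern = /^([ \t]*)\/\/[ \t]*(#define[ \t]+ICM_20948_USE_DMP\b.*)$/m;
  return headerText.replace(pattern, "$1$2");
}

// Report whether the define is active (present and not commented out).
function isDmpEnabled(headerText) {
  return /^[ \t]*#define[ \t]+ICM_20948_USE_DMP\b/m.test(headerText);
}

// Illustrative input, not the real header contents.
const sampleHeader = "#include <stdint.h>\n//#define ICM_20948_USE_DMP\n";
console.log(isDmpEnabled(sampleHeader));
console.log(isDmpEnabled(enableDmp(sampleHeader)));
```

In Node, the same functions could be applied to the file contents read with fs.readFileSync and written back with fs.writeFileSync.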

D. Configure Firmware

- Open software/integration/controller/controller.ino

- (Optional) Create bluetooth-name.h:

cd software/integration/controller

cp bluetooth-name.h.example bluetooth-name.h

# Edit bluetooth-name.h to customize BLE name

E. Compile and Upload

- Select board: Tools > Board > esp32 > XIAO_ESP32S3

- Select port: Tools > Port > /dev/ttyACM0 (or your port)

- Click "Upload" button

- Wait for upload to complete

- Open Serial Monitor (115200 baud) to verify operation

Verify Success:

- Serial monitor shows "IMU initialized successfully!"

- Serial monitor shows "BLE initialized and advertising as 'ESP32-Labubu'"

- No error messages about sensor initialization

3. Web Interface Setup

A. Local Development

cd software/integration/server

npx http-server -p 8080

Open browser to http://localhost:8080

B. Production Deployment (GitLab Pages)

Already deployed at:

https://classes.pages.cba.mit.edu/863.25/CBA/cba-machine/index.html

No additional setup needed for production use.

4. Audio Setup (Optional)

A. Prepare SD Card

- Format SD card as FAT32

- Create directory: /audio/

- Add WAV files with naming convention:

/audio/audio_0000_intro.wav

/audio/audio_0001_beep.wav

/audio/audio_0042_melody.wav

Requirements:

- Format: WAV (RIFF)

- Encoding: 16-bit PCM

- Channels: Mono or Stereo

- Sample rate: Any (16kHz-48kHz recommended)
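The requirements above can be checked before copying a file to the SD card. Below is a minimal sketch that reads the standard RIFF/WAVE header fields from an ArrayBuffer. The function name inspectWavHeader is hypothetical, and the sketch assumes the "fmt " chunk comes directly after the RIFF header (the common layout), which is not guaranteed for every WAV file.

```javascript
// Read `length` bytes starting at `offset` as an ASCII string.
function readAscii(view, offset, length) {
  let text = "";
  for (let i = 0; i < length; i++) {
    text += String.fromCharCode(view.getUint8(offset + i));
  }
  return text;
}

// Check that a buffer looks like a 16-bit PCM, mono or stereo WAV file.
// ASSUMPTION: the "fmt " chunk starts at byte 12 (canonical header layout).
function inspectWavHeader(arrayBuffer) {
  if (arrayBuffer.byteLength < 36) {
    return { ok: false, reason: "too short for a WAV header" };
  }
  const view = new DataView(arrayBuffer);
  if (readAscii(view, 0, 4) !== "RIFF" || readAscii(view, 8, 4) !== "WAVE") {
    return { ok: false, reason: "not a RIFF/WAVE file" };
  }
  if (readAscii(view, 12, 4) !== "fmt ") {
    return { ok: false, reason: "fmt chunk is not first (not handled by this sketch)" };
  }
  const audioFormat = view.getUint16(20, true); // 1 means uncompressed PCM
  const channels = view.getUint16(22, true);
  const sampleRate = view.getUint32(24, true);
  const bitsPerSample = view.getUint16(34, true);
  if (audioFormat !== 1) return { ok: false, reason: "encoding is not PCM" };
  if (bitsPerSample !== 16) return { ok: false, reason: "not 16-bit" };
  if (channels !== 1 && channels !== 2) return { ok: false, reason: "not mono or stereo" };
  return { ok: true, channels, sampleRate, bitsPerSample };
}
```

In the browser, the buffer can come from `await file.arrayBuffer()` on a File chosen by the user.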

B. Insert SD Card

Insert SD card into ESP32 SD reader (CS on Pin 21)

Usage Instructions

Basic Operation

1. Power On System

- Connect ESP32 to USB or external power

- Wait for BLE advertising (check Serial Monitor)

2. Connect from Browser

- Open web interface (localhost or GitLab Pages)

- Click "Connect to ESP32"

- Select "ESP32-Labubu" from pairing dialog

- Wait for "Connected" status
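The pairing dialog described above is what the browser's Web Bluetooth API shows. A minimal sketch of such a connection follows; it is not the project's actual connection code. The function name connectToRobot is hypothetical, and the service UUID is a required argument because the firmware's UUID is not given in this document.

```javascript
// Connect to the ESP32 over Web Bluetooth and return the GATT service.
// `bluetooth` is normally navigator.bluetooth; it is a parameter so the
// function can be exercised with a stand-in object outside the browser.
// ASSUMPTION: serviceUuid must match whatever the firmware advertises.
async function connectToRobot(bluetooth, serviceUuid, deviceName = "ESP32-Labubu") {
  const device = await bluetooth.requestDevice({
    filters: [{ name: deviceName }],
    optionalServices: [serviceUuid],
  });
  const server = await device.gatt.connect();
  const service = await server.getPrimaryService(serviceUuid);
  return { device, server, service };
}
```

Browsers only allow requestDevice in response to a user gesture, so this would be called from the "Connect to ESP32" click handler, for example `connectToRobot(navigator.bluetooth, yourServiceUuid)`.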

3. Observe IMU Visualization

- 3D icosahedron should appear

- Rotate physical device → 3D model rotates in real-time

- Down-facing face highlighted in yellow

4. Calibrate Orientation

- Position device in desired neutral orientation

- Click "Calibrate" button

- Future rotations now relative to this position
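One common way to make rotations relative to a calibrated pose is to multiply each incoming quaternion by the inverse of the quaternion captured when "Calibrate" was clicked. The sketch below illustrates that idea; it is not taken from the project's code. Quaternions are assumed to be unit length and stored as [w, x, y, z], and whether the inverse is applied on the left or the right depends on the frame convention in use.

```javascript
// Conjugate of a quaternion; for unit quaternions this is also the inverse.
function conjugate([w, x, y, z]) {
  return [w, -x, -y, -z];
}

// Hamilton product a * b.
function multiply([aw, ax, ay, az], [bw, bx, by, bz]) {
  return [
    aw * bw - ax * bx - ay * by - az * bz,
    aw * bx + ax * bw + ay * bz - az * by,
    aw * by - ax * bz + ay * bw + az * bx,
    aw * bz + ax * by - ay * bx + az * bw,
  ];
}

// Capture a reference pose and return a function that expresses later
// readings relative to it. ASSUMPTION: left-multiplying by the inverse
// matches the frame convention; swap the operands if it does not.
function makeCalibrator(reference) {
  const inverse = conjugate(reference);
  return (current) => multiply(inverse, current);
}

const h = Math.SQRT1_2;
const calibrated = makeCalibrator([h, 0, 0, h]); // pose at the moment of calibration
console.log(calibrated([h, 0, 0, h])); // approximately [1, 0, 0, 0], i.e. no rotation
```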

5. Control Servos

Manual Control:

- Enter face IDs (1-20) in command field

- Click "Extend Servo" or "Retract Servo"

- Example: 1,5,10 extends faces 1, 5, and 10
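A minimal sketch of how the comma-separated input could be validated before it is sent to the device. The function name parseFaceIds is hypothetical, and the web interface may parse the field differently.

```javascript
// Parse a string such as "1,5,10" into an array of face IDs.
// Throws if any entry is not a whole number between 1 and 20.
function parseFaceIds(input) {
  return input
    .split(",")
    .map((part) => part.trim())
    .filter((part) => part.length > 0)
    .map((part) => {
      if (!/^\d+$/.test(part)) {
        throw new Error(`not a face ID: "${part}"`);
      }
      const id = Number(part);
      if (id < 1 || id > 20) {
        throw new RangeError(`face ID out of range (1-20): ${id}`);
      }
      return id;
    });
}

console.log(parseFaceIds("1,5,10"));
```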

Command Sequencer:

- Add commands to sequence

- Set delays between commands

- Click "Run Sequence" for automated execution

6. Play Audio

- Use the play_audio command with an audio ID

- Example: ID 42 plays /audio/audio_0042*.wav
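The ID-to-file lookup implied by the example can be sketched as follows. The function names audioFilePrefix and findAudioFile are hypothetical. The firmware's real matching logic is not shown in this document, so treat this as an illustration of the naming convention only.

```javascript
// Build the path prefix for an audio ID, zero-padded to four digits.
function audioFilePrefix(audioId) {
  if (!Number.isInteger(audioId) || audioId < 0) {
    throw new RangeError(`audio ID must be a non-negative integer, got ${audioId}`);
  }
  return `/audio/audio_${String(audioId).padStart(4, "0")}`;
}

// Return the first file name that matches the ID, or null if none does.
// A match is the prefix, an optional "_description", then ".wav".
function findAudioFile(audioId, fileNames) {
  const prefix = audioFilePrefix(audioId);
  const match = fileNames.find(
    (name) => name.startsWith(prefix) && /^(_.*)?\.wav$/i.test(name.slice(prefix.length))
  );
  return match || null;
}

const files = [
  "/audio/audio_0000_intro.wav",
  "/audio/audio_0001_beep.wav",
  "/audio/audio_0042_melody.wav",
];
console.log(findAudioFile(42, files)); // "/audio/audio_0042_melody.wav"
```

IDs above 9999 produce more than four digits with this padding. Whether the firmware handles that case is one of the open questions listed under Audio File Naming.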

Advanced Features

Command Sequencing

Purpose: Create repeatable demonstrations with precise timing

Steps:

- Click "Command Sequencer" tab

- Select command type (move_servo, reset, etc.)

- Enter arguments (face IDs)

- Set delay (milliseconds)

- Click "Add Command"

- Repeat to build sequence

- Click "Run Sequence" to execute
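To reason about the timing of a sequence built with the steps above, it can help to compute when each command fires. The sketch below assumes the delay is waited after its command is sent, so each command starts once the delays of all earlier commands have elapsed. The function name buildSchedule and the object shape are illustrative, not the sequencer's actual data model.

```javascript
// Turn a list of { command, args, delayMs } into fire times in milliseconds.
// ASSUMPTION: each delay is applied after its own command.
function buildSchedule(sequence) {
  let elapsedMs = 0;
  return sequence.map(({ command, args = [], delayMs }) => {
    const entry = { atMs: elapsedMs, command, args };
    elapsedMs += delayMs;
    return entry;
  });
}

const schedule = buildSchedule([
  { command: "move_servo", args: [1, 2, 3], delayMs: 2000 },
  { command: "move_servo", args: [4, 5, 6], delayMs: 2000 },
  { command: "reset", delayMs: 0 },
]);
console.log(schedule.map((entry) => `${entry.atMs} ms: ${entry.command}`));
```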

Example Sequence:

move_servo 1,2,3 2000 # Move faces 1-3, wait 2s

move_servo 4,5,6 2000 # Move faces 4-6, wait 2s

reset 0 # Restart device
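The example above suggests a simple line format: a command name, an optional comma-separated argument list, a delay in milliseconds, and an optional # comment. A minimal parser sketch under that assumption follows. The exact format of saved sequence files is not documented here, and parseSequence is a hypothetical name.

```javascript
// Parse sequence text into { command, args, delayMs } objects.
// ASSUMPTION: the last token on each line is the delay in milliseconds and
// everything after "#" is a comment.
function parseSequence(text) {
  const commands = [];
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.split("#")[0].trim();
    if (line === "") continue; // skip blank and comment-only lines
    const tokens = line.split(/\s+/);
    if (tokens.length < 2) {
      throw new Error(`expected "<command> [args] <delay>": "${line}"`);
    }
    const delayMs = Number(tokens[tokens.length - 1]);
    if (!Number.isInteger(delayMs) || delayMs < 0) {
      throw new Error(`invalid delay in "${line}"`);
    }
    const args = tokens
      .slice(1, -1)
      .join("")
      .split(",")
      .filter((part) => part !== "")
      .map(Number);
    if (args.some((value) => !Number.isInteger(value))) {
      throw new Error(`invalid argument in "${line}"`);
    }
    commands.push({ command: tokens[0], args, delayMs });
  }
  return commands;
}

console.log(parseSequence("move_servo 1,2,3 2000 # Move faces 1-3\nreset 0"));
```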

Import/Export:

- Save sequences as .txt files

- Share sequences with team members

- Load saved sequences via "Upload" button

Voice Commands (Experimental)

File: voice.js

Some voice command functionality may be present. See code comments for details.

Face Neighbor Detection

The system automatically detects:

- Yellow face: Currently down-facing (gravity-aligned)

- Orange face: Neighbor most aligned with movement direction

- Green faces: Other neighbors of down-facing face

Use Case: Programmatic selection of faces to actuate based on orientation and motion.
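The selection described above reduces to a few dot products. The sketch below picks the down-facing face as the one whose normal is most aligned with gravity, then picks the neighbor most aligned with the movement vector, and treats the remaining neighbors as the faces to actuate. The data shapes (normals and neighbors keyed by face ID) and the function names are assumptions. The normals must already be expressed in the world frame, and the toy data uses four made-up faces rather than the real icosahedron.

```javascript
function dot(a, b) {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

// Return the face ID whose normal has the greatest dot product with `direction`.
function mostAligned(faceIds, normals, direction) {
  let best = null;
  let bestDot = -Infinity;
  for (const id of faceIds) {
    const d = dot(normals[id], direction);
    if (d > bestDot) {
      best = id;
      bestDot = d;
    }
  }
  return best;
}

// Classify faces: `down` (yellow), `target` (orange), `actuate` (green).
// ASSUMPTION: normals are world-frame unit vectors keyed by face ID, and
// neighbors maps each face ID to an array of adjacent face IDs.
function classifyFaces(normals, neighbors, gravity, movement) {
  const allIds = Object.keys(normals).map(Number);
  const down = mostAligned(allIds, normals, gravity);
  const target = mostAligned(neighbors[down], normals, movement);
  const actuate = neighbors[down].filter((id) => id !== target);
  return { down, target, actuate };
}

// Toy data: face 1 points down, faces 2-4 surround it.
const toyNormals = { 1: [0, 0, -1], 2: [1, 0, 0], 3: [-0.5, 0.866, 0], 4: [-0.5, -0.866, 0] };
const toyNeighbors = { 1: [2, 3, 4], 2: [1, 3, 4], 3: [1, 2, 4], 4: [1, 2, 3] };
console.log(classifyFaces(toyNormals, toyNeighbors, [0, 0, -1], [1, 0, 0]));
// down 1, target 2, actuate [3, 4]
```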

Troubleshooting

Problem: "ESP32 not found" during connection

- Solution: Ensure ESP32 powered on and BLE advertising

- Check Serial Monitor for "BLE initialized" message

- Restart ESP32 if needed

Problem: IMU data not streaming

- Solution: Verify DMP support enabled (see Installation step 2C)

- Check Serial Monitor for IMU errors

- Verify I2C wiring

Problem: Servos not responding

- Solution: Check external power supply connected

- Verify servo power rails on PCA9685 boards

- Check I2C addresses (0x48, 0x60)

Problem: Audio playback silent or distorted

- Solution: Check SD card formatted as FAT32

- Verify WAV format (16-bit PCM only)

- Adjust volumeGain in lib-07-audio.ino

- Check I2S wiring to MAX98357A

Problem: BLE disconnects frequently

- Solution: Reduce distance to ESP32

- Minimize interference (WiFi routers, etc.)

- Check battery power if using battery

Questions and Unclear Aspects

The following aspects require clarification for proper documentation.

Hardware Questions

Servo Power Supply Specifications

- What voltage are the servos powered from?

- What is the current draw per servo?

- Total system current draw with all servos active?

- Recommended power supply model/rating?

Physical Mounting

- How are servos mounted to icosahedron faces?

- What is the mechanical linkage design?

- Are there any mechanical limits or endstops?

PCB Design

- Is there a custom PCB, or is this breadboarded?

- If PCB exists, where are the design files?

- What connectors are used for servos?

Servo Calibration

- Do all servos use the same PWM min/max values?

- Is per-servo calibration needed?

- Where should calibration data be stored?

Software Questions

Face Numbering Convention

- How are faces numbered (1-20)?

- What is the relationship between face ID and servo channel?

- Is there a diagram showing face numbering on the physical model?

IMU Mounting Orientation

- What is the physical orientation of the IMU?

- Which axis points "up" when device is upright?

- Are the coordinate transformations in brains.js correct?

Movement Vector

- What is the "movement vector" conceptually?

- How should it be controlled by the user?

- Is it derived from accelerometer data or user input?

Audio File Naming

- Why is the audio ID limited to 20 bits (1,048,575) but files use 4 digits (9,999)?

- Should the naming convention be changed to support higher IDs?

- Are there existing audio files, and what are their purposes?

Command Timing

- What is the optimal delay for the move_servo command? (Currently 2000ms)

- Should this be configurable per-command or per-servo?

- What are the mechanical constraints on servo speed?

Integration Questions

Testing Procedures

- What tests should be run before deployment?

- Are there automated test sequences?

- What are the expected outputs for each test?

Safety Features

- Are there any safety limits (max current, temperature)?

- Should there be emergency stop functionality?

- What happens if servos stall or bind?

Power Management

- Battery vs. wall power?

- Battery life expectations?

- Low-battery warnings?

Deployment Environment

- Indoor vs. outdoor use?

- Temperature/humidity constraints?

- Expected runtime duration?

Version History

- When was this system last modified?

- What changes were made in recent updates?

- Are there breaking changes from previous versions?

Documentation Questions

Original Design Intent

- What was the original use case?

- What demonstrations or behaviors were intended?

- Are there videos of the system in operation?

Known Issues

- Are there any known bugs or limitations?

- What features are incomplete or experimental?

- What features are deprecated?

Future Plans

- Are there planned improvements?

- What features are on the roadmap?

- Is this project actively maintained?

Missing Documentation

Face Neighbors Data

- What is the format of face_neighbors.json?

- How was this file generated?

- Does it match the physical geometry?

Example Sequences

- What is inside example_sequence_17_18.txt?

- Are there other example sequences?

- What do the sequence numbers mean?

Postscript

The skeleton of this documentation was generated with the following prompt:

Carefully analyze the #file:integration folder to understand how the system works. Then produce a detailed documentation in #file:README.md. Make sure to organize the documentation based on system components:

- IMU Sensor

- Servo

- Networking

- Audio

- Main loop

Also, document the libraries we used (e.g. IMU, Servo, Audio, BLE).

The goal is to make sure the project can be reproduced by future engineers.

During documentation process, collect a list of questions that are unclear from the code artifacts. We will ask people to provide more information.